Imagine a bustling city seen from above at night. Roads glow like arteries, intersections pulse with movement, and the entire network behaves like one living organism. If you wanted to understand this city, you would not study a single street in isolation. You would observe how neighbourhoods influence one another, how traffic flows, and how signals travel across the map.

Graph Neural Networks (GNNs) work with data in a similar way. They process information not as disconnected points, but as networks of relationships. Nodes represent entities, edges represent interactions, and learning happens by exchanging information between connected elements.

Rather than treating data as a grid or a sequence, GNNs embrace the structure and organisation of graphs.

The Challenge of Graph-Structured Data

Traditional neural networks are built for orderly input. Convolutional networks expect images structured in pixel grids. Recurrent networks work with sequences. Yet many real-world systems are naturally irregular:

- Social networks

- Transportation systems

- Molecular structures

- Recommendation engines

- Knowledge graphs

In these domains, relationships matter more than isolated attributes. A molecule’s function depends on how atoms bond. A person’s influence emerges from the shape of their social connections. A product recommendation works because of shared preferences between users and items.

GNNs model these interdependencies. They treat the network of relationships as the primary context in which meaning is found.

Professionals expanding their understanding of relational learning often explore structured study, such as enrollment in an AI course in Mumbai, where modern architectures are examined in applied industry contexts.

Message Passing: How Information Moves Through the Graph

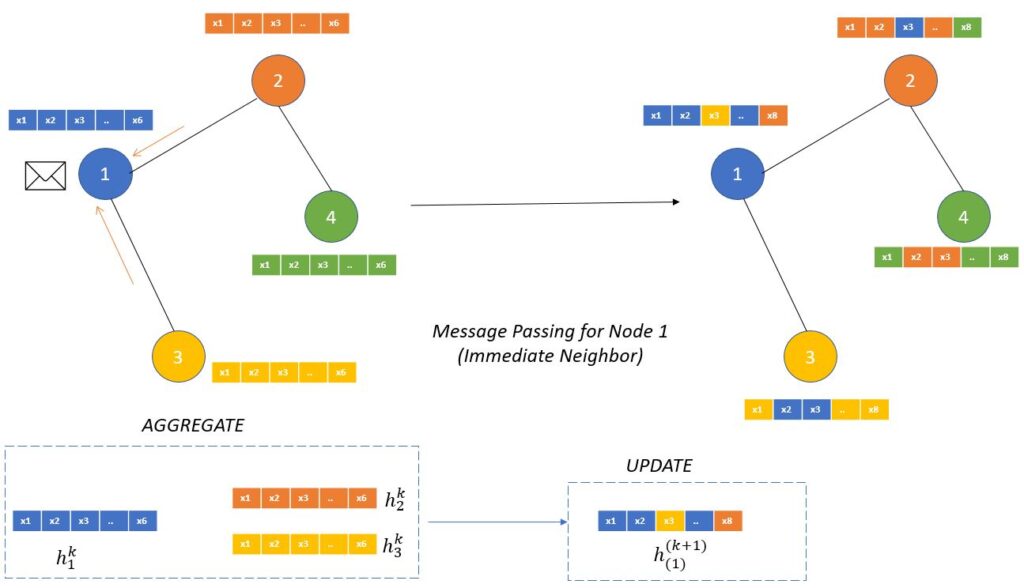

The core idea behind GNNs is message passing. Each node in the graph sends information to, and receives information from, its neighbours. With every round of communication, nodes update their internal representations.

Think of it like neighbourhood gossip:

- Each node has its own story.

- It listens to stories from nearby nodes.

- It revises its understanding based on these shared narratives.

In practice, this works in iterative steps:

- Message Generation: each node creates a message using its own features and, optionally, the features of the connecting edge.

- Message Aggregation: each node collects the incoming messages from all of its neighbours.

- Feature Update: each node updates its feature representation using the aggregated information.
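The three steps can be sketched as one round of message passing on a tiny graph. This is a minimal, hand-rolled illustration under assumptions of our own, not any particular library's API: features are plain lists of floats, a message is the sender's features scaled by an edge weight, aggregation is a sum, and the update simply averages a node's old features with the aggregate (real GNNs learn these functions).

```python
# One round of message passing on a small undirected graph.
# Assumptions (illustrative only): messages scale the sender's features by an
# edge weight, aggregation is an element-wise sum, and the update averages the
# node's old features with the aggregate. Real GNNs learn these functions.


def message(sender_features, edge_weight):
    """Message Generation: scale the sender's features by the edge weight."""
    return [edge_weight * x for x in sender_features]


def aggregate(messages, dim):
    """Message Aggregation: element-wise sum of all incoming messages."""
    total = [0.0] * dim
    for m in messages:
        for k in range(dim):
            total[k] += m[k]
    return total


def update(old_features, aggregated):
    """Feature Update: blend the node's own features with the aggregate."""
    return [0.5 * (h + a) for h, a in zip(old_features, aggregated)]


def message_passing_round(features, edges):
    """Run one round. `edges` maps (u, v) -> weight for an undirected graph."""
    dim = len(next(iter(features.values())))
    inbox = {node: [] for node in features}
    for (u, v), w in edges.items():
        # Undirected edge: each endpoint sends a message to the other.
        inbox[v].append(message(features[u], w))
        inbox[u].append(message(features[v], w))
    return {
        node: update(features[node], aggregate(inbox[node], dim))
        for node in features
    }


# Hypothetical three-node path graph a - b - c.
features = {"a": [1.0, 0.0], "b": [0.0, 1.0], "c": [1.0, 1.0]}
edges = {("a", "b"): 1.0, ("b", "c"): 0.5}
new_features = message_passing_round(features, edges)
print(new_features)
```

Node `b` sits in the middle, so its new representation mixes information from both `a` and `c`, while each end node hears only from `b`.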

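This allows local information to flow outward through the network so that global relationships can emerge. After k rounds of message passing, a node's representation reflects information from nodes up to k hops away, so with enough rounds a node can learn about distant regions of the graph.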
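The "k rounds, k hops" behaviour can be checked directly by tracking which nodes' information has reached each node, rather than tracking numeric features. The sketch below is a simplified illustration that assumes a small path graph stored as an adjacency dictionary; the function name `receptive_fields` is our own.

```python
# Track which source nodes' information has reached each node after a given
# number of message-passing rounds. Each round, a node merges everything its
# neighbours had heard by the end of the previous round.


def receptive_fields(adjacency, rounds):
    """Return, for each node, the set of nodes whose information has reached it."""
    heard = {node: {node} for node in adjacency}  # round 0: only itself
    for _ in range(rounds):
        # The new dict is built entirely from the previous round's `heard`.
        heard = {
            node: heard[node].union(*(heard[n] for n in adjacency[node]))
            for node in adjacency
        }
    return heard


# Hypothetical path graph: 0 - 1 - 2 - 3 - 4
path = {0: [1], 1: [0, 2], 2: [1, 3], 3: [2, 4], 4: [3]}
for k in range(5):
    print(k, sorted(receptive_fields(path, k)[0]))
```

Node 0 gains exactly one more hop of context per round, which is why deeper GNNs (more rounds) can capture longer-range structure.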

Feature Aggregation: Summarising Neighbourhood Knowledge

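Aggregation is the process of combining messages from surrounding nodes. Because nodes have varying numbers of neighbours, and those neighbours have no natural order, aggregation functions must accept any number of inputs and be permutation-invariant (order-independent). The most common choices are: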

- Sum

- Mean

- Max pooling
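The three aggregators can be written in a few lines each. This minimal sketch assumes neighbour features are plain lists of floats and checks that every ordering of the same neighbours produces the same result.

```python
# Sum, mean and max aggregation applied element-wise to neighbour feature
# vectors, plus a check that the result does not depend on neighbour order.
import itertools


def sum_agg(vectors):
    return [sum(col) for col in zip(*vectors)]


def mean_agg(vectors):
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def max_agg(vectors):
    return [max(col) for col in zip(*vectors)]


# Hypothetical features of three neighbours.
neighbours = [[1.0, 4.0], [3.0, 2.0], [2.0, 0.0]]

for agg in (sum_agg, mean_agg, max_agg):
    # Collect the result for every possible ordering of the neighbours.
    results = {tuple(agg(list(order))) for order in itertools.permutations(neighbours)}
    print(agg.__name__, results)  # a single result per aggregator
```

The choice matters: a sum grows with the number of neighbours, a mean normalises that away, and a max keeps only the strongest signal in each feature. Mathematically all three are order-independent; with arbitrary floating-point values, a sum can differ in its last bit depending on order, which is harmless in practice.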

In a social network, for example, summarising the characteristics of a person's connections can reveal:

- Their community

- Their influence ranking

- Their behavioural patterns

Aggregation is what keeps GNNs flexible and scalable. Because the same aggregation and update functions are shared by every node, a single set of learned parameters can process graphs of different shapes and sizes.

Architectural Variants of GNNs

Multiple architectures are built on the foundational message-passing framework:

Graph Convolutional Networks (GCNs)

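They apply a convolution-like operation to the graph: each node combines its own features with its neighbours' features, weighted according to node degrees, and passes the result through a shared linear transformation. This smooths information across connected nodes.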
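A single GCN layer can be sketched in plain Python. This follows the widely used propagation rule from Kipf and Welling's GCN: add a self-loop to every node, then sum the transformed features of the node and its neighbours, scaling each term by 1 / sqrt(d_i * d_j), where degrees include the self-loop, and apply a ReLU. The graph, features and identity weight matrix below are hypothetical; a real layer learns the weights and would use a tensor library instead of lists.

```python
# One GCN layer with self-loops and symmetric degree normalisation.
import math


def matvec(weight, vector):
    """Multiply a weight matrix (a list of rows) by a feature vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weight]


def relu(vector):
    return [max(0.0, x) for x in vector]


def gcn_layer(features, adjacency, weight):
    """Normalised sum over each node and its neighbours, then ReLU."""
    # Degrees count the added self-loop (the "A + I" step of the GCN rule).
    degree = {node: len(adjacency[node]) + 1 for node in adjacency}
    out = {}
    for i in adjacency:
        acc = [0.0] * len(weight)
        for j in [i, *adjacency[i]]:
            coeff = 1.0 / math.sqrt(degree[i] * degree[j])
            transformed = matvec(weight, features[j])
            acc = [a + coeff * t for a, t in zip(acc, transformed)]
        out[i] = relu(acc)
    return out


# Hypothetical triangle graph with an identity weight matrix, so the output
# shows the effect of the normalised neighbourhood sum on its own.
triangle = {"a": ["b", "c"], "b": ["a", "c"], "c": ["a", "b"]}
features = {"a": [3.0, 0.0], "b": [0.0, 3.0], "c": [0.0, 0.0]}
identity = [[1.0, 0.0], [0.0, 1.0]]
smoothed = gcn_layer(features, triangle, identity)
print(smoothed)  # every node ends up with the same vector, [1.0, 1.0]
```

In a fully connected triangle every node sees the same neighbourhood, so one layer already makes all three representations identical: a small-scale view of the "smoothing" described above.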

Graph Attention Networks (GATs)

They introduce attention mechanisms to assign different weights to different neighbours, letting the model focus on the most relevant relationships.
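The attention step can be sketched as follows, loosely following the single-head form from the original GAT paper: score each neighbour j of node i with LeakyReLU(a · [W h_i || W h_j]), normalise the scores with a softmax over the neighbourhood (including the node itself), and use the result to weight the neighbours' transformed features. The weight matrix and attention vector here are hand-picked assumptions rather than learned values, and the final non-linearity is omitted for brevity.

```python
# Single-head, GAT-style attention over a node's neighbourhood.
import math


def matvec(weight, vector):
    """Multiply a weight matrix (a list of rows) by a feature vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weight]


def leaky_relu(x, slope=0.2):
    return x if x > 0 else slope * x


def attention_coefficients(features, adjacency, weight, attn, node):
    """Attention weights of `node` over itself and its neighbours."""
    candidates = [node, *adjacency[node]]
    z = {j: matvec(weight, features[j]) for j in candidates}
    # Raw score e_ij = LeakyReLU(attn . [W h_i || W h_j]); `+` concatenates lists.
    scores = {
        j: leaky_relu(sum(a * x for a, x in zip(attn, z[node] + z[j])))
        for j in candidates
    }
    # Softmax over the neighbourhood (subtracting the max for numerical stability).
    top = max(scores.values())
    exps = {j: math.exp(s - top) for j, s in scores.items()}
    total = sum(exps.values())
    return {j: e / total for j, e in exps.items()}


def gat_update(features, adjacency, weight, attn, node):
    """New features for `node`: attention-weighted sum of transformed features."""
    alpha = attention_coefficients(features, adjacency, weight, attn, node)
    out = [0.0] * len(weight)
    for j, a in alpha.items():
        out = [o + a * x for o, x in zip(out, matvec(weight, features[j]))]
    return out


# Hypothetical star graph. The attention vector only looks at the neighbour's
# first transformed feature, so "x" (first feature 2.0) should dominate.
star = {"hub": ["x", "y"], "x": ["hub"], "y": ["hub"]}
features = {"hub": [0.0, 1.0], "x": [2.0, 0.0], "y": [0.0, 0.0]}
identity = [[1.0, 0.0], [0.0, 1.0]]
attn = [0.0, 0.0, 1.0, 0.0]
alpha = attention_coefficients(features, star, identity, attn, "hub")
print(alpha)
print(gat_update(features, star, identity, attn, "hub"))
```

Unlike the fixed, degree-based coefficients of a GCN, these weights depend on the features themselves, so the same graph can route influence differently for different inputs.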

GraphSAGE

This architecture samples a fixed-size set of neighbours for each node and aggregates only their information. That keeps the cost per node bounded and makes training scalable on very large graphs.
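Neighbour sampling is easy to see on a node with many neighbours. The sketch below is a simplified, GraphSAGE-style layer under our own assumptions: it samples at most `sample_size` neighbours uniformly, averages their features (mean aggregation), and concatenates the result with the node's own features. The learned weight matrix and non-linearity that normally follow are left out, and the graph is hypothetical.

```python
# A GraphSAGE-style layer: sample neighbours, mean-aggregate, concatenate.
import random


def mean(vectors):
    """Element-wise mean of a non-empty list of feature vectors."""
    return [sum(col) / len(vectors) for col in zip(*vectors)]


def sage_layer(features, adjacency, sample_size, rng):
    out = {}
    for node, neighbours in adjacency.items():
        # Read at most `sample_size` neighbours, however many the node has.
        if len(neighbours) > sample_size:
            sampled = rng.sample(neighbours, sample_size)
        else:
            sampled = list(neighbours)
        if sampled:
            summary = mean([features[n] for n in sampled])
        else:
            summary = [0.0] * len(features[node])
        # Concatenate own features with the neighbourhood summary.
        # (A learned weight matrix and non-linearity would normally follow.)
        out[node] = features[node] + summary
    return out


# Hypothetical hub with 100 neighbours, of which only 5 are read.
rng = random.Random(0)
leaves = [f"n{i}" for i in range(100)]
adjacency = {"hub": leaves, **{leaf: ["hub"] for leaf in leaves}}
features = {"hub": [0.0], **{f"n{i}": [float(i)] for i in range(100)}}
out = sage_layer(features, adjacency, sample_size=5, rng=rng)
print(out["hub"])  # [hub's own feature, mean of the 5 sampled neighbours]
print(out["n7"])   # [7.0, 0.0]: a leaf's only neighbour is the hub
```

The hub's cost is the same whether it has 100 or 100,000 neighbours, which is the property that lets this approach scale to very large graphs.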

Each architecture represents a different strategy for deciding how influence flows through the network.

Real-World Applications: Meaning Beyond Structure

GNNs are being applied across many domains:

- In drug discovery, GNNs predict molecular properties and interactions, helping researchers prioritise candidates before slower laboratory testing.

- Fraud detection systems map transaction networks to flag suspicious patterns.

- Recommendation engines analyse user-item relationships to deliver more personalised suggestions.

- Urban planning tools model traffic dynamics on road networks to support smarter city design.

Learners who want to apply these ideas practically often pursue structured learning pathways such as an AI course in Mumbai, where graph-based reasoning is tied to industry case work in areas such as finance, healthcare, and logistics.

Conclusion

Graph Neural Networks mark a shift in how we understand data. Instead of forcing information into rigid shapes, they embrace complexity. They learn from relationships, neighbourhoods, and connectivity. Through message passing and feature aggregation, GNNs create representations that reflect how entities influence one another.

In a world shaped by networks, from biology to business to communication, the ability to learn from graphs is becoming essential. GNNs help us move from isolated data points to meaningful, interconnected understanding, revealing patterns that would otherwise remain hidden within the web of relationships.